User testing the Digital Memory Database

Over the past five months, the Landecker Digital Memory Lab research team have been user testing our flagship resource, the Digital Memory Database, which brings together examples of digital Holocaust memory practice from around the world.

By Dr. Kate Marrison and Dr. Ben Pelling

In April 2025, the Digital Collective Memory Platform gave us the ideal space to launch a series of user testing sessions to gather feedback on the living-database archive. Joining us online, trusted colleagues and friends working in the field of digital memory were the first to see the wireframed alpha version of the database.

Listening to their feedback, we developed the site further (as discussed below). Our inaugural Connective Holocaust Commemoration Expo then marked the official beta launch, where we showcased the database to a wide range of academics, educators, filmmakers, digital creatives and heritage professionals from more than 30 countries across the Middle East, Latin America, North America, Europe and Asia. This was immediately followed by a workshop at the biennial Max and Hilde Kochmann Summer School for PhD students in Modern European-Jewish History and Culture, hosted by the Sussex Weidenfeld Institute of Jewish Studies in cooperation with the Centre for Jewish Studies, University of Graz and the European Association of Israel Studies (EAIS).

Summer School students user testing the database. Photo credit: Steve Wang

Next came the British and Irish Association for Holocaust Studies “Contexts of the Holocaust” annual conference, held at the University of Kent, where we hosted another workshop with experts in Holocaust history, memory and education. More testing followed in July with memory scholars and creative practitioners at the Memory Studies Association’s “Beyond Crises: Resilience and (In)Stability” annual conference, hosted by Charles University and the Czech Academy of Sciences in Prague. Read more of our reflections on the event.

“This is a gift to us all” – Alpha testing session feedback from a participant working in Holocaust heritage and education.

User testing design

To support an iterative development process, we ran multiple sessions throughout the year, designed with both qualitative and quantitative methods in mind. Each workshop was divided into two parts. First, participants were given an example user case study to prompt their activity, which encouraged them to start in different places on entering the site. Some took on the persona of a researcher gathering examples of digital practice; others took on the role of heritage professionals looking for examples of site-based augmented reality applications; and another group of testers started with a collection of assets containing both digital walkthroughs of projects and interviews with their creators.

Colleagues were then given 50 minutes to freely explore the site before coming together for a focus group discussion.

The discussion centred on the following points:

- Usability and interactivity

- Functionality

- Searchability

How we responded to the feedback from these sessions is discussed below.

Alongside and in support of the qualitative user testing, we also used automated tracking to record how our test users interacted with the database. This consisted of heatmaps and recordings of sample user journeys. All tracking was aggregated and anonymised to preserve user confidentiality.

Having reviewed a range of applications and code bases, we selected ContentSquare as the most suitable tool. Once a testing session was complete, the ContentSquare dashboard provided us with a range of aggregated visualisations. The two most significant for us were:

- The ‘Scroll’ heatmap: This shows how far users scrolled down a given webpage and so provides insight into which areas of the page are most likely to be viewed. As is common with many websites, the top section of the page was the one users were most likely to view.

- The ‘Clicks’ heatmap: This shows an aggregated ‘map’ of the areas of the page that were clicked most often. This in turn shows which buttons and links were used most, and which may have gone unnoticed by users.
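To illustrate the idea behind these two heatmaps (not ContentSquare’s own implementation, which we do not describe here), the sketch below works from hypothetical session data. For scrolling, each session is reduced to its maximum scroll depth as a fraction of page height, and we count the share of sessions that reached each horizontal band of the page. Because a session that reaches a lower band has necessarily passed every band above it, the share can only fall as you move down the page, which is why the top of a page is usually the most viewed. For clicks, counting clicks per element and listing the elements with none is a simple way to surface features users may have missed. The function names and element labels are assumptions made for illustration.

```python
from collections import Counter
from typing import List


def scroll_reach(max_depths: List[float], bands: int = 10) -> List[float]:
    """For each horizontal band of the page (top first), return the fraction
    of sessions whose deepest scroll position reached that band.

    max_depths: hypothetical per-session maximum depth reached by the bottom
    of the viewport, from 0.0 (top of page) to 1.0 (bottom of page).
    """
    if not max_depths:
        return [0.0] * bands
    reach = []
    for i in range(bands):
        band_top = i / bands  # a band counts as seen once the viewport bottom passes its top edge
        seen = sum(1 for d in max_depths if d > band_top)
        reach.append(seen / len(max_depths))
    return reach


def unclicked(clicks: List[str], elements: List[str]) -> List[str]:
    """Return the page elements (hypothetical labels) that received no clicks."""
    counts = Counter(clicks)
    return [e for e in elements if counts[e] == 0]


if __name__ == "__main__":
    # Four hypothetical sessions, page split into four bands.
    print(scroll_reach([0.3, 0.55, 1.0, 0.2], bands=4))  # [1.0, 0.75, 0.5, 0.25]
    print(unclicked(["search", "map", "search"], ["search", "map", "timeline"]))  # ['timeline']
```

A real tool records far richer data (viewport size, time on page, click coordinates), but the aggregation principle is the same: individual sessions are collapsed into per-area counts, so no single user’s journey is exposed.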

‘Scroll’ heatmap

‘Clicks’ heatmap

The ContentSquare tool also captures user movements through the database. Watching back a sample of these recordings helped us identify where elements of a page may have been missed or misunderstood.

Clip of user journey through the database

Note: the finalised live site will not track individual users. ContentSquare has been used only during the user testing phase of the database build.

Responding to the feedback

The data gathered via this automated method was useful in two ways. Firstly, because we ran multiple testing sessions, we were able to compare how users engaged with the navigation, search and visualisations as the database progressed from wireframe to advanced functionality. Secondly, we combined the automated tracking data with participants’ verbal and written responses. This gave us a more rounded picture of the user experience and helped us understand the usability issues participants encountered.

During alpha testing, several participants found navigating the database via the initial, basic globe map confusing. To improve this, we replaced the background globe graphic and updated the location markers to show how many projects relate to each location. We also added a time slider that filters projects by their start and end dates.
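The time slider and the updated location markers can be thought of as a filter followed by a count. The sketch below shows one way to express that, assuming each project record carries a location plus a start year and an optional end year; the `Project` type, its fields and the overlap rule are illustrative assumptions, not the database’s actual schema. A project is kept if its active period overlaps the range selected on the slider, and each marker then shows the number of kept projects at its location.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Project:
    # Hypothetical record shape; the real database schema is not described here.
    title: str
    location: str
    start_year: int
    end_year: Optional[int] = None  # None means the project is ongoing


def in_range(p: Project, lo: int, hi: int) -> bool:
    """True if the project's active period overlaps the slider range [lo, hi]."""
    # Ongoing projects are treated as running at least to the end of the selected range.
    end = p.end_year if p.end_year is not None else hi
    return p.start_year <= hi and end >= lo


def marker_counts(projects: List[Project], lo: int, hi: int) -> Dict[str, int]:
    """Number of projects per location among those visible in the selected range."""
    return dict(Counter(p.location for p in projects if in_range(p, lo, hi)))


if __name__ == "__main__":
    projects = [
        Project("A", "Berlin", 2010, 2015),
        Project("B", "Berlin", 2018),          # ongoing
        Project("C", "Warsaw", 2005, 2008),
    ]
    print(marker_counts(projects, 2012, 2020))  # {'Berlin': 2}
    print(marker_counts(projects, 2000, 2009))  # {'Warsaw': 1}
```

Using an overlap test rather than requiring a project to fall entirely inside the range means long-running projects stay visible whenever any part of their lifespan is selected.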

Participants also asked us to expand the search functionality, which at the alpha stage covered only curated keyword tags. In response, we developed a more in-depth, full-text search of the hosted interview transcripts.
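Full-text search differs from the alpha-stage tag search in that every word of every transcript becomes searchable, not just the curated keywords. A common way to implement this is an inverted index that maps each word to the transcripts containing it; the sketch below shows that technique in miniature. It rests on our own simplifying assumptions (lowercase word tokenisation, a match requiring every query word, hypothetical transcript IDs) and is not a description of the database’s actual search engine.

```python
import re
from collections import defaultdict
from typing import Dict, List, Set

# Simple word tokeniser: runs of lowercase letters, digits or apostrophes.
TOKEN = re.compile(r"[a-z0-9']+")


def tokenize(text: str) -> List[str]:
    return TOKEN.findall(text.lower())


def build_index(transcripts: Dict[str, str]) -> Dict[str, Set[str]]:
    """Map each word to the set of transcript IDs in which it appears."""
    index: Dict[str, Set[str]] = defaultdict(set)
    for doc_id, text in transcripts.items():
        for word in tokenize(text):
            index[word].add(doc_id)
    return index


def search(index: Dict[str, Set[str]], query: str) -> List[str]:
    """Return the sorted IDs of transcripts containing every word in the query."""
    words = tokenize(query)
    if not words:
        return []
    results = set(index.get(words[0], set()))
    for word in words[1:]:
        results &= index.get(word, set())  # intersect: all words must match
    return sorted(results)


if __name__ == "__main__":
    transcripts = {
        "int-01": "We built the augmented reality app on site.",
        "int-02": "The archive holds survivor testimony.",
        "int-03": "Testimony was recorded for the app.",
    }
    idx = build_index(transcripts)
    print(search(idx, "testimony"))      # ['int-02', 'int-03']
    print(search(idx, "app testimony"))  # ['int-03']
```

A production search would add stemming, ranking and phrase matching, but the index structure is what makes searching every word of every transcript fast compared with scanning each transcript in turn.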

These changes were well received during the beta-testing series, which also produced additional feedback that informed further development of the database throughout the summer.

For example, several academics and researchers expressed a desire to curate and annotate searches, audio clips and data visualisations. In response, we have expanded the personal user space to include advanced tools that provide this functionality.

Listening to our beta testers, we have also added features that take advantage of our indexed keywords and metadata: a historical mapping filter, natural language search, and networked thematic diagrams.

Collating the responses across all sessions with the questionnaire results, we created an internal report that guided our subsequent database design meetings. This feedback proved invaluable as we continued to develop the platform and refine its features ahead of our final testing stage.

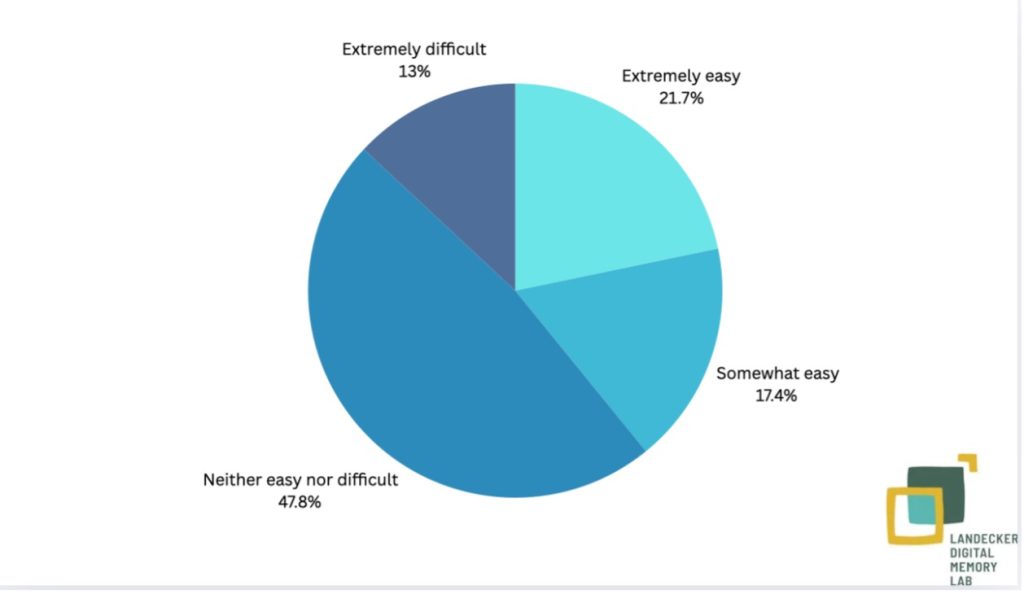

Example chart from internal report on user feedback regarding our audio-visual clipping tool

Next steps

To ensure these new features are rigorously reviewed, we are extending our testing to Beta 2.0 and running another round of workshops throughout the autumn.

We are grateful to all who have participated in our user testing so far – we want to continue to develop the database with these communities rather than just for them.

You can be part of our final online user testing session this November. We would particularly like to hear from those who took part in earlier rounds, but everyone from our target user groups is welcome (Holocaust heritage and education professionals, academics, and creative and tech professionals). More details and the link to register for free are available here.